
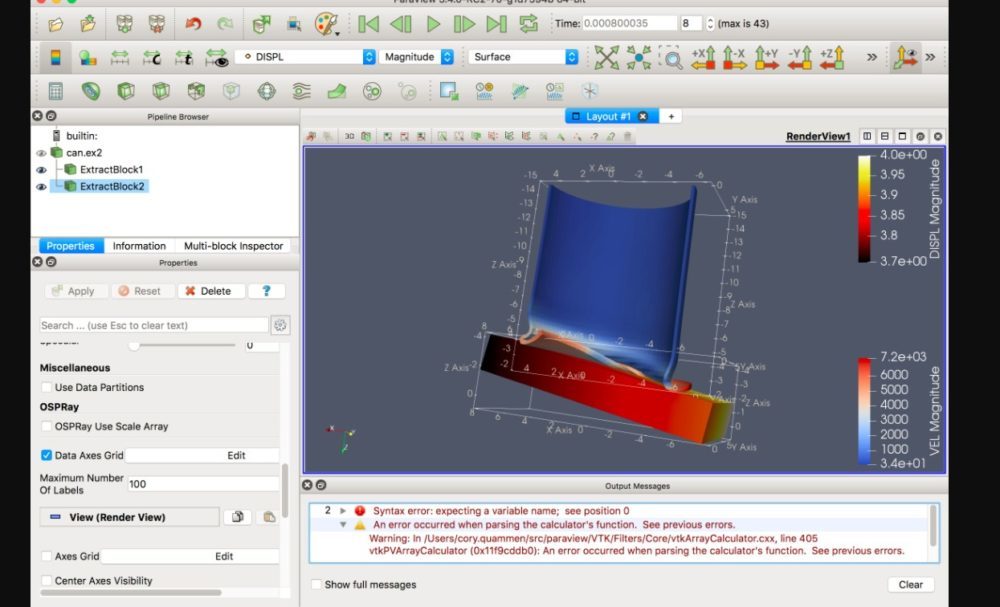

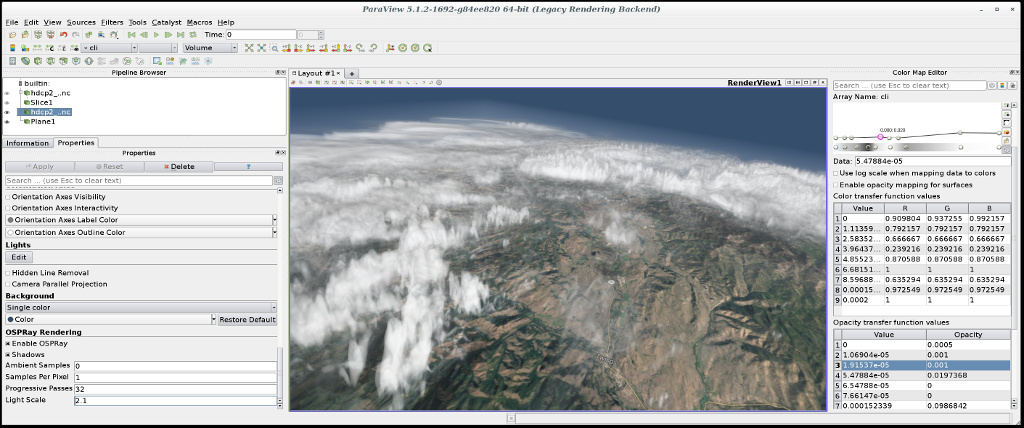

In large-scale geophysical simulations, visualization is commonly applied to numerical data saved in a disk system after the simulation has been performed. Such a visualization style, called post-hoc visualization, would be difficult to apply in the near future. The size of the numerical data to be transferred and stored for post-hoc visualization becomes unrealistically large as the scale of the simulation increases. Even for a simulation with a moderate grid size, say \(1024^3\), post-hoc visualization requires 8 GB of disk space to save just one scalar field (in double-precision floating-point numbers) for just one simulation time step.

It is not uncommon today to carry out simulations with a grid size of the order of \(2048^3\) or \(4096^3\). The required disk space for saving one scalar field in those cases is 64 or 512 GB, respectively. If one tries to analyze multiple scalar fields or vector fields for multiple time steps, the output data would flood the disk system.

Another style of visualization, called in-situ visualization, is garnering attention among simulation researchers (Ma et al.). It is a method of applying visualization while the simulation is running. The data to be stored after a simulation with in-situ visualization are two-dimensional images, rather than three-dimensional arrays of floating-point numbers. In addition to this dimensional reduction, one can apply compression algorithms based on arithmetic coding to further reduce the size of the image data. In-situ visualization plays an important role in large-scale simulations.

Major application programs for general-purpose visualization are now equipped with in-situ libraries or tools, such as Catalyst (Ayachit et al. 2015) for ParaView and libsim (Whitlock et al.) for VisIt. ParaView and VisIt have a rich set of visualization capabilities. These applications have been developed based on the Visualization Tool Kit (VTK) (Schroeder et al.). The OpenGL graphics library is used for hardware-accelerated rendering in the VTK. The OpenGL functions can be executed in a computing environment without graphics processing units (GPUs) using the Off-Screen Mesa (OSMesa) library. Because various libraries, such as OpenGL, VTK, and OSMesa, are involved, it becomes difficult, if not impossible, to optimize them on newly developed supercomputers with special accelerators, including vector processors.

One of the authors (N. O.) has developed a library with minimal in-situ visualization capabilities (Ohno and Ohtani 2014). The library, VISMO, is written in Fortran 2003. All the visualization methods are implemented in that language with no additional libraries. VISMO has been used in various simulations, especially fluid turbulence simulations (Miura et al.). The original VISMO adopts simulation data defined on a Cartesian coordinate system, whereas spherical geometry is essential in geoscientific simulations.

We developed a grid system, the Yin-Yang grid, for spherical simulations (Kageyama and Sato 2004). It is a type of overset grid system that comprises two congruent component grids, Yin and Yang, which are combined to cover a spherical surface with partial overlaps. The boundary values on each component grid are set through mutual interpolation according to the standard overset methodology (Chesshire and Henshaw 1990). The Yin-Yang grid has been applied to geodynamo simulation (Kageyama et al. 2010), which was the original motivation for this new grid system, mantle-convection simulation (Yoshida and Kageyama 2004; Kameyama et al. 2008; Tackley 2008), global circulation models of the atmosphere (Peng et al. 2016), solar-dynamo simulations (Hotta et al. 2015), and astrophysical simulations (Wongwathanarat et al.).

We note the special nature of visualization in spherical geometry and the importance of the Yin-Yang grid. When a target field of a physical quantity is distributed spatially in all (x, y, and z) directions, one could map the data onto a Cartesian coordinate system before applying visualization on the Cartesian grid. However, such a Cartesian mapping is impractical for data defined in a spherical shell, which is the geometry commonly used in the earth sciences. Fig. 1 shows varied thermal convection in spherical shells between two concentric spheres with radii \(r_i\) and \(r_o\), with different shell depths \(d=r_o-r_i\). If we simply map a sphere with radius \(r\) into a cube to visualize the spherical shell data, the volume is almost doubled, even if we could ignore the inner spherical void.
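The storage figures quoted above for \(1024^3\), \(2048^3\), and \(4096^3\) grids, and the statement that a cube enclosing a sphere has almost twice its volume, can be checked with a few lines of arithmetic. The following is a minimal Python sketch, not part of VISMO; the function names are hypothetical, and it assumes that "GB" in the text means \(2^{30}\) bytes and that one double-precision value occupies 8 bytes.

```python
import math

BYTES_PER_DOUBLE = 8  # assumption: IEEE 754 double precision


def scalar_field_gib(n: int) -> float:
    """Size, in units of 2**30 bytes, of one double-precision scalar field on an n^3 grid."""
    return n**3 * BYTES_PER_DOUBLE / 2**30


def cube_to_sphere_volume_ratio() -> float:
    """Volume of a cube of side 2r divided by the volume of a sphere of radius r.

    (2r)^3 / (4/3 * pi * r^3) = 6 / pi, independent of r.
    """
    return 8.0 / (4.0 / 3.0 * math.pi)


if __name__ == "__main__":
    for n in (1024, 2048, 4096):
        size = scalar_field_gib(n)
        print(f"{n}^3 grid: {size:.0f} GB per scalar field per time step")
    print(f"cube / sphere volume ratio: {cube_to_sphere_volume_ratio():.2f}")
```

The ratio \(6/\pi \approx 1.91\) does not depend on \(r\), and it only counts the full sphere; the inner void of a shell makes the wasted fraction of the Cartesian box larger still.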
